By Rick Strahl

http://www.west-wind.com/

Last update: November 5, 1999

Microsoft has been on course to integrate into the Enterprise for a few years now, and there's been a hard push at the company to provide tools that make the move to scalable server-based applications as easy as possible for developers. One of the main components that figures heavily in Microsoft's Windows DNA (Windows Distributed interNet Applications Architecture) plans is Microsoft Transaction Server. This tool defies easy classification, as it provides a host of useful and powerful functionality that has traditionally been very difficult to program. This very diversity is one of the reasons MTS is often misunderstood and difficult to get a handle on. In this article I'll address several of the key features and what they mean to VFP developers.

This document covers the following topics:

- An overview of Microsoft Transaction Server's main features

- How MTS works

- The impact of Transaction Server in server based applications

- How to create VFP COM components for use in MTS

- Specific discussions of several technologies in MTS:

  - Just In Time Activation

  - Distributed Transaction Management

  - Role based security

- An analysis of what these features actually mean for VFP applications

So, you've been building applications that use COM. You have a few server applications that are humming away, but in the back of your mind you're thinking: "I don't really know what to do when the time comes to scale this application both on the local machine and across the network." The focus of Microsoft Transaction Server is on helping make this process easier by providing an abstraction layer that handles many of these details for you. You've probably heard about 3-tier and n-tier programming, which should contain middle tier servers. One of the tasks of a middle tier component is to abstract the scalability issues, and MTS can fill this role rather nicely by hosting your components.

MTS accomplishes most of its functionality through a concept called packages (to be renamed COM+ applications in Windows 2000). Packages are host containers for COM components. To create a COM component that runs in MTS you create the component and then add it to one of these packages. A package is a grouping of components that share the package's attributes. The package provides a common context to all components that reside within it. The context allows MTS to manage the components inside, rather than allowing your application to access them directly. It does so through an abstraction layer that relies on COM marshalling and remapping registry entries of your components to itself. When a component is invoked by a client (using CREATEOBJECT() or the CoCreateInstance() API just like you always do), the MTS package is activated instead and the MTS package then in turn creates your COM object and passes any calls to methods and properties forward to the actual component running within the package context.

If you're thinking this will slow down the performance of your COM components, you're right. MTS packages are typically implemented Out Of Process, which requires all the overhead of an EXE server, plus additional overhead for the package to call your component. However, some of the overhead is gained back through some caching that occurs inside of the MTS package as it has the ability to manage the components running inside of it.

The good news is that MTS can potentially provide a number of serious scalability improvements like pool management and resource sharing. The bad news is that MTS in its current version doesn't do much of this yet. What it does provide, though, through this abstraction layer, is functionality that's very easy to access and implement from within your applications. Some of this functionality has traditionally been very hard to implement, and MTS can make the task almost easy.

Let's look at what functionality you can gain by running inside of a Transaction Server package. This is not an easy task, as even a passing discussion of all of the functionality would fill a book. MTS appears to have a lot of seemingly unrelated functionality wrapped up into a single product. However, all of this functionality relies heavily on the packaging mechanism just discussed, so it makes sense to provide it all within the scope of MTS.

MTS wraps core functionality that has to do with building scalable applications and implements functionality that has previously been available mainly on large mainframe systems. It provides functionality in a wide variety of areas: scalability, distributed transaction management for SQL, a simple security model, COM object packaging, server based context management, and administration and monitoring features. Let's look at a few of these in detail.

- Scalability and resource management of COM objects: Just In Time Activation (JITA)

  This feature is probably the most important one that developers are looking for from MTS. COM objects loaded through MTS can be automatically unloaded and reloaded. MTS caches the VFP runtime, so load and reload is relatively fast. MTS may also cache COM references of your object, so if objects are loaded in quick cycles your servers are never unloaded/reloaded even though your client code releases references. MTS handles all of this through its own abstraction layer, saving resources on the server. But there is a cost: COM operation through MTS can be considerably slower than calling COM objects directly, depending on how you implement MTS functionality in your components.

- Distributed Transaction Management

  This is where MTS gets its name. MTS allows database-independent management of transactions spanning multiple data sources and multiple COM objects. Essentially MTS can wrap operations into its own transactional monitor, which works through the Distributed Transaction Coordinator (DTC), causing any ODBC/OLE DB database operations to be monitored. Components can call transactional functions to complete or abort operations, at which time MTS, rather than the application, causes the data sources to commit or revert. Data sources must be DTC compliant, which means SQL Server or Oracle at this time. (This may also apply to other data sources with third-party drivers.) The Visual FoxPro ODBC driver is not DTC compliant, so you can't have VFP data participate in distributed transactions, but you can of course use VFP data in your COM objects as long as they don't require DTC transactions.

  The key feature of this technology is that transactions have been abstracted to the COM layer. Rather than coding transactional SQL syntax, you can use code-based logic to deal with transactions. The transactions are SQL back-end independent (as long as the back end is DTC capable). Transactions can also span multiple databases and even servers running different SQL back ends, such as SQL Server and Oracle. A single transaction could wrap data access that writes to both! If you've written code for this type of transaction before, you know how difficult the task is; MTS now makes it a breeze.

- Role-based security

  MTS implements a new security model that allows you to configure roles for a component. The roles are configured at the component level and mapped to NT users. Your code can then query for the roles under which the component is running, rather than using the much more complex NT Security model and functions to figure out user identity. Roles are also useful for deployment because they can be packaged with the component and can be automatically installed on other machines if the necessary accounts exist. Roles are similar to NT groups, but they are applied specifically to a package and span all of the components that live in the package.

- Packaging technology

  MTS includes a packaging mechanism that allows taking a package (an MTS word for an application or group of objects), which might contain multiple COM objects, and packing it up into a distribution file. The distribution file contains all binary files (DLLs, type libraries and support files) as well as all information about the registry and the security settings of the component. This package can be installed on another machine for instant uptime.

- Remoting Support

  In-process DLL COM objects cannot be natively remoted to another machine. DCOM requires out-of-process servers in order to run. With the MTS package mechanism, the package can be activated across a network connection, which in turn allows your in-process objects to be run over the network. An additional benefit of this functionality is that a client never directly accesses the component on the other end. If a DCOM connection dies, it tends to orphan the running server on the server machine. With MTS, the package can catch connection problems and automatically release and possibly even reload the server after a connection has been lost and re-established from the client side.

- Context management

  MTS includes a static data store object similar to ASP's Application object to allow components to store data for state-keeping and inter-component communication.
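As a rough illustration of the role-based security item in the list above, a component method might check the caller's role through the context object before doing any work. This is only a sketch: the class name, the method name and the "Managers" role are made up for the example, it assumes that role has been defined for the package, and it relies on the IsSecurityEnabled() and IsCallerInRole() methods that the MTS object context exposes. The context object itself is retrieved the same way as described later in this article.

```foxpro
* Hypothetical component: class, method and role names are assumptions
DEFINE CLASS OrderApproval AS Custom OLEPUBLIC

   oMTS = .NULL.

   FUNCTION Init
      THIS.oMTS = CREATEOBJECT("MTXAS.AppServer.1")
   ENDFUNC

   FUNCTION ApproveOrder
   LPARAMETER lcOrderId
   LOCAL loContext
      loContext = THIS.oMTS.GetObjectContext()

      * No context means the component is not running inside MTS
      IF ISNULL(loContext)
         RETURN .F.
      ENDIF

      * Only enforce the role when MTS security is actually switched on
      IF loContext.IsSecurityEnabled() AND !loContext.IsCallerInRole("Managers")
         loContext.SetAbort()
         RETURN .F.
      ENDIF

      * ... application logic for the approved order would go here ...

      loContext.SetComplete()
      RETURN .T.
   ENDFUNC

ENDDEFINE
```

The point of the design is that the code asks about a role name rather than an NT account, so the mapping from accounts to roles stays an administrative setting on the package.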

I've written a number of articles in this magazine that pointed out the serious issues related to the fact that the Visual FoxPro runtime was unable to handle multiple simultaneous method calls. Almost any IIS article I had to postscript with a scalability warning. This meant that ASP pages and MTS requests had to wait for another method call to finish before they could be processed resulting in very limited load capability of Visual FoxPro COM objects in a multithreaded environment such as IIS.

Well, no more! With Service Pack 3 of Visual Studio, Microsoft has totally revamped the VFP runtime with a new multi-threaded version in a new, separately available file called vfp6t.dll. This new runtime has moved all of VFP's global data into thread local storage, which provides the data isolation needed to run COM objects on different threads totally independent of each other. Since each instance gets its own local data, instances no longer interfere with each other through a 'global' instance of VFP as they did in previous versions (and still do with the 'standard' runtime). The result is that VFP is finally capable of handling simultaneous method calls from multi-threaded clients.

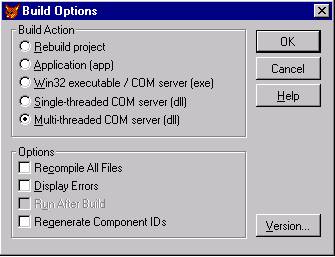

To build components for the new runtime there's a new command BUILD MTDLL, which builds a DLL that links with the new vfp6t.dll file. A new project build option is also available which accomplishes the same task:

If you're using Visual FoxPro with Active Server Pages, MTS or any other multi-threaded client you'll want to upgrade to SP3 as soon as possible.

Keep in mind, though, that although multi-threading is crucially important for scaling applications, it's not a silver bullet that will solve all your problems. In fact, you may face other, more serious issues, such as too many requests processing simultaneously and overloading the resources available on your server. Multi-threading can also cause operations to slow down, as NT's thread management and the simultaneous operation of requests can significantly affect performance. Multi-threading rarely provides better performance; rather, it provides better responsiveness: overall request times may increase, but more people may receive their results in a timely manner. Weigh these things carefully and know your bottlenecks so you don't get surprised by an overloaded server.

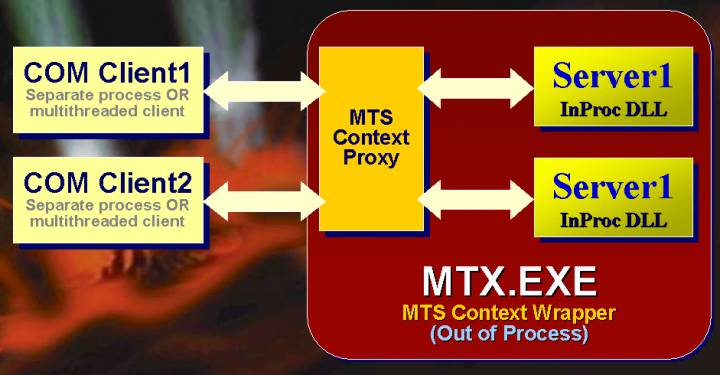

Before you decide to use MTS you should ask yourself whether you really need its functionality. If you don't need any of its specialty features like role-based security or multi-phase commit of database transactions, there might be no good reason to bother with it. Although scalability is one of the often touted features of MTS, the current release of MTS does not provide much in this area at this time. Even when running the new multi-threaded VFP runtime, MTS is not much more than a wrapper around your COM object. This out-of-process COM object wrapper in turn acts as a proxy and hosts all access to your component via passthrough. All object access occurs through an out-of-process COM call, and there's the additional overhead of passing the data through two interface layers. The result is that running components inside MTS tends to be slower than running them as plain in-process COM objects, without gaining any real scalability benefits at least at this time.

Figure 1.1: MTS hosts your components inside of its own out-of-process package container. The proxy passes through all object calls to your actual component. This layer of abstraction allows MTS to manage your components for you.

Here's how it works. MTS hosts your COM objects inside a package, which is an out-of-process component you can see on the Processes tab of the Task Manager running as Mtx.exe. Each package uses its own Mtx.exe host to encapsulate its application COM objects. When an MTS component is installed into MTS, the ClassIds and ProgIds are mapped to the package, which in turn knows how to pass on any interface calls directly to your actual COM components residing inside the package. The end result is that a client application never has a direct connection to your COM object, but always has a proxy reference to the MTS package, which passes through all interface calls.

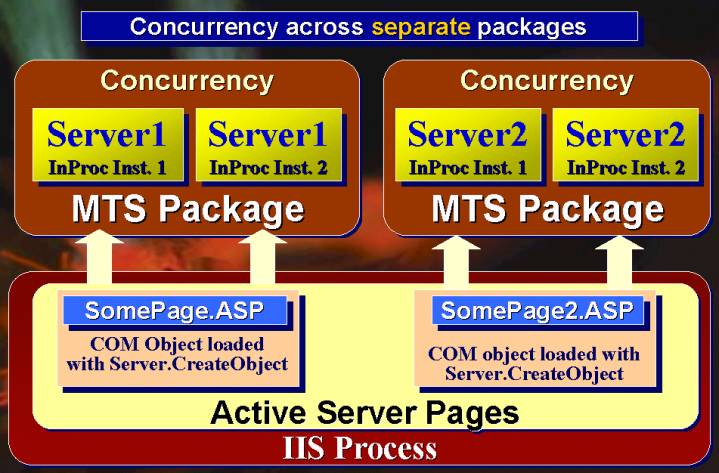

Through some registry mapping, an instance of this object is instantiated when you issue CREATEOBJECT. The package then creates your object and calls the methods in typical COM passthrough fashion, using the same proxy and stub architecture that is used for cross-process and DCOM marshalling of interface calls. Any method calls made to the component hit the package container's proxy object first, and then are passed down to your internal COM object. Figure 1.1 shows the context object that sits between the client and your component. This context object provides the abstraction layer that MTS uses to provide its features to your COM components. Figure 1.2 shows how objects are called from an ASP page, which is a typical example of a multi-threaded client calling your COM components. Note that if multiple simultaneous requests are made on your component, multiple instances start up and run inside the MTS package. How the object references inside the package are provided is up to MTS: it could be a brand new object or a recycled reference. If you're using the new multi-threaded runtime available with Visual Studio SP3, you'll be able to get simultaneous method calls.

With the original version of VFP 6.0 and the standard runtime, calls to your component will block, essentially allowing only a single method call to occur at a time in a given package. If you use ASP with or without MTS, make sure you download and install the new SP3 runtime to get maximum functionality from your VFP COM components (see sidebar).

The MTS package provides component services I mentioned earlier and isolates your component from the client process. (Hey, that's not really a feature since you can do that with out-of-process components on your own—but that's marketing for you.)

Out-of-process packages, known as Server Packages, are the norm, but MTS also supports in-process packages, which are called Library Packages. These work the same as out-of-process packages but run inside the client process. However, I've seen problems with this approach, and it seems Microsoft provided this feature more for its own system services (like IIS virtual applications) than anything else. Running components in in-process packages has resulted in many weird and unexplainable crashes and lockups in my tests, where out-of-process packages with the exact same configuration work fine. Your mileage might vary. In-process packages are slightly faster, but they also void the process-isolation features that protect the client process from a crashing component in your package. You might not be able to restart in-process packages, and they often require the calling process to shut down before you can refresh or update the components. I recommend sticking with Server Packages.

In order to use MTS and gain scalability benefits you have to think about building stateless components. Stateless objects are objects that don't rely on property values to maintain their "state" or context between method calls. These objects make no assumptions about any values for themselves or other "global" aspects of the system. They reestablish state themselves when they start up, by using parameters or values stored in a database or establishing state from scratch. The typical steps for a stateless object are:

1. Receive a method call

2. Establish state

3. Run application logic

4. Release state

5. Return results to the client
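The five steps above can be sketched as a single VFP method. This is a minimal, hypothetical example: the class, the table path c:\data\customers and the field names cust_id and company are assumptions made for illustration only. What matters is that the method gets everything it needs from its parameter and the database, and leaves nothing behind in properties.

```foxpro
* Hypothetical stateless component; table path and field names are assumptions
DEFINE CLASS CustomerInfo AS Custom OLEPUBLIC

   FUNCTION GetCompany
   LPARAMETER lcCustId               && 1. Receive a method call
   LOCAL lcCompany
      lcCompany = ""

      * 2. Establish state from the parameter and the database,
      *    never from property values left over from an earlier call
      IF !USED("customers")
         USE c:\data\customers IN 0 SHARED ALIAS customers
      ENDIF
      SELECT customers

      * 3. Run application logic
      LOCATE FOR ALLTRIM(cust_id) == ALLTRIM(lcCustId)
      IF FOUND()
         lcCompany = ALLTRIM(customers.company)
      ENDIF

      * 4. Release state
      USE IN customers

      * 5. Return results to the client
      RETURN lcCompany
   ENDFUNC

ENDDEFINE
```

Because nothing is stored on the object between calls, it makes no difference to the client whether the next call is served by the same instance or a freshly created one.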

The important thing here is that a method call cannot rely on property values being set a certain way when the method is entered. When a server is stateless, the server has the ability to recycle or unload the object without the client noticing that the underlying server reference is different. And this is exactly what MTS can do behind the scenes for you without you having to write any code to make it happen. This and many other tasks are the responsibility of the package. In theory, this is how MTS can abstract many scalability issues for you, so object management code does not need to be written by a client application. Your job is to create the object and forget about it; MTS will manage the component and make sure that it's available when you need it. That's the theory anyway, but currently this functionality is very limited and, as we'll see in a minute, also very inefficient. This may change in the future though.

Regardless, if you're going to build an effective component for use with MTS, it should be stateless. For MTS this means that when a method call completes it should be able to release the object and hand you a brand-new copy on the next request. In order for this to work, your component cannot rely on non-default property settings used by multiple consecutive method calls.

If the object is stateless, MTS can take the existing object reference and recycle it to pass on to another client. That's the theory, anyway, but in reality MTS destroys your object after each call that releases state (more on this in a second) and then totally re-creates it when you make another method call, rather than simply caching existing references. MTS does not provide object pooling yet; this is a feature that will likely be provided in later versions of MTS. The current behavior provides a safe environment for legacy (non-MTS) components by always guaranteeing startup state to the component, but it is not the optimal way to cache objects. Pooling, on the other hand, would simply keep an instance of an object alive and reuse that existing instance. Pooling is much more efficient, but potentially problematic for legacy objects that don't know about state and MTS context objects.

To manage state MTS introduces a concept called Context. The context object is essentially a reference to the MTS package, which maintains the state of the COM objects inside of it. A COM component can retrieve a reference to this context object with CREATEOBJECT("MTXAS.AppServer.1"). From the object the current package's context can be retrieved with the GetObjectContext() method. This reference lets you control the status of state by committing transactions using the SetComplete() or SetAbort() methods of the context object. When you call the latter methods MTS lets the object go stateless – it actually releases the object reference completely causing the COM object to unload.

Although objects should be stateless, you can also keep state in objects simply by not calling SetComplete() or SetAbort() at the end of a method call. If you do this, the object reference behaves like a typical COM object that is stateful – all the property values and internal object state remain intact between method calls until you finally call SetComplete or SetAbort. This is great for legacy servers that weren't designed for stateless operation to start with. However, stateful servers don't scale well. While servers have state MTS can't manage them. This means they tie up the resources they use and also could hang because of a lost connection or other failure from the client. Because the object isn't managed MTS can't trap these failures as it could with a stateless object.
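To make the stateful case concrete, here is a small sketch of a component that deliberately holds on to state across several calls and only releases it at the end. The class and its AddItem()/Finish() methods are hypothetical; the only MTS calls used are the ones described above.

```foxpro
* Hypothetical component that keeps state between calls on purpose
DEFINE CLASS OrderBuilder AS Custom OLEPUBLIC

   oMTS = .NULL.
   nTotal = 0

   FUNCTION Init
      THIS.oMTS = CREATEOBJECT("MTXAS.AppServer.1")
   ENDFUNC

   * No SetComplete()/SetAbort() here, so nTotal survives to the next call
   FUNCTION AddItem
   LPARAMETER lnPrice
      THIS.nTotal = THIS.nTotal + lnPrice
      RETURN THIS.nTotal
   ENDFUNC

   * The last call in the sequence tells MTS that state can be released
   FUNCTION Finish
   LOCAL lnResult, loContext
      lnResult = THIS.nTotal
      loContext = THIS.oMTS.GetObjectContext()
      IF !ISNULL(loContext)
         loContext.SetComplete()
      ENDIF
      RETURN lnResult
   ENDFUNC

ENDDEFINE
```

A client would call AddItem() as often as needed and then Finish() once. Until Finish() runs, the instance stays dedicated to that client, which is exactly the scaling cost described above.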

To demonstrate how MTS works, I'll build a small functional sample that calls a simulated long-running method called SlowHit(). SlowHit() doesn't do anything other than wait for a specified number of seconds passed in as a parameter to simulate multiple simultaneously running requests on this server. To start, create the basic server code:

    DEFINE CLASS MTSDemo AS Custom OLEPUBLIC

       * Reference to the MTS base object
       oMTS = .NULL.
       * Log file handle; stays 0 until WriteOutput() opens the file
       hFile = 0

       FUNCTION Init
          THIS.WriteOutput("Init Fired")
          THIS.oMTS = CREATEOBJECT("MTXAS.AppServer.1")
       ENDFUNC

       FUNCTION Destroy
          THIS.WriteOutput("Destroy Fired")
          IF THIS.hFile > 0
             FCLOSE(THIS.hFile)
          ENDIF
       ENDFUNC

       ************************************************************************
       * MTSDemo :: SlowHit
       *********************************
       ***  Function: Allows you to simulate a long request. Pass number of
       ***            seconds. Use MTS transactions
       ************************************************************************
       FUNCTION SlowHit
       LPARAMETER lnSecs
       LOCAL loContext, x

          THIS.WriteOutput("SlowHit Fired" + TRANSFORM(lnSecs))

          * Retrieve the package context for the current request
          loContext = THIS.oMTS.GetObjectContext()

          lnSecs = IIF(EMPTY(lnSecs), 0, lnSecs)

          * Wait one second per loop iteration
          DECLARE Sleep IN Win32API INTEGER
          FOR x = 1 TO lnSecs
             Sleep(1000)
          ENDFOR

          DECLARE INTEGER GetCurrentThreadId IN Win32API

          * Tell MTS it's okay to release the object
          IF !ISNULL(loContext)
             loContext.SetComplete()
          ENDIF

          RETURN GetCurrentThreadId()
       ENDFUNC

       FUNCTION WriteOutput
       LPARAMETER lcString

          * Create one uniquely named log file per object instance
          IF THIS.hFile = 0
             THIS.hFile = FCREATE(SYS(2023) + "\MTSDemo" + SYS(2015) + ".txt")
          ENDIF

          IF THIS.hFile # -1
             FWRITE(THIS.hFile, lcString + CHR(13) + CHR(10))
          ENDIF
       ENDFUNC

    ENDDEFINE

A few optional pieces in this code deal with logging information to disk using the WriteOutput method; I'll discuss these later. The key feature of a COM object built for use in MTS is the retrieval of an object reference to the MTXAS.AppServer.1 object, which is the MTS base object. To retrieve the current package context for the currently active request, use its GetObjectContext() method and store it to a variable:

THIS.oMTS = CREATEOBJECT("MTXAS.AppServer.1")

loContext = THIS.oMTS.GetObjectContext()

I stuck the CreateObject() call into the Init event of the class so I don't have to retype code that has to run in each method. As you'll see in a minute, the Init of your server fires every time a method call is made to your object, so this is no more efficient than directly calling the same code in each method. However, it saves some typing and centralizes the code.

The only thing left to do in your server method code is to call either SetComplete() or SetAbort(). It doesn't matter which one you call, since this component isn't participating in any SQL transactions. To tell MTS that it's okay to release resources on the object, use:

IF !ISNULL(loContext)

loContext.SetComplete()

ENDIF
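For a component that does take part in a SQL transaction, the choice between the two calls does matter. The following is a rough sketch only, not a tested implementation: the class, the ODBC data source name "Orders" and the table and column names are all assumptions, and it presumes a DTC compliant back end, a component whose transaction setting enlists it in an MTS transaction, and a connection that MTS monitors as described in the feature overview.

```foxpro
* Hypothetical transactional component; DSN, tables and columns are assumptions
DEFINE CLASS StockServer AS Custom OLEPUBLIC

   oMTS = .NULL.

   FUNCTION Init
      THIS.oMTS = CREATEOBJECT("MTXAS.AppServer.1")
   ENDFUNC

   FUNCTION RemoveStock
   LPARAMETER lcItem, lnQty
   LOCAL loContext, lnHandle, llOk
      loContext = THIS.oMTS.GetObjectContext()
      llOk = .F.

      lnHandle = SQLCONNECT("Orders")
      IF lnHandle > 0
         * SQLEXEC() returns a positive value on success and -1 on error
         llOk = SQLEXEC(lnHandle, ;
            "UPDATE stock SET qty = qty - ?lnQty WHERE item = ?lcItem") > 0
         IF llOk
            llOk = SQLEXEC(lnHandle, ;
               "INSERT INTO stocklog (item, qty) VALUES (?lcItem, ?lnQty)") > 0
         ENDIF
         SQLDISCONNECT(lnHandle)
      ENDIF

      * Let MTS commit or roll back instead of issuing SQL transaction commands
      IF !ISNULL(loContext)
         IF llOk
            loContext.SetComplete()
         ELSE
            loContext.SetAbort()
         ENDIF
      ENDIF

      RETURN llOk
   ENDFUNC

ENDDEFINE
```

Either call still releases the object; the difference is only whether the work done through the monitored connection is committed or reverted.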

Now create a project named MTSDemos and add a PRG file (or VCX if you choose to go that route) named MTSDemos. Then compile this server into a COM object:

BUILD MTDLL MTSDemos FROM MTSDemos

If you don't have the new multi-threaded runtime included with SP3 (see sidebar), just use BUILD DLL instead, but realize that your server will block simultaneous client requests: no concurrent access on that object will be possible.

Before dumping the server into MTS, use the following code to test it:

o = CREATEOBJECT("MTSDemos.MTSDemo")

? o.SlowHit(5)

This should run the server, wait for five seconds and then spit out a thread ID number. If that works, move this component into MTS by following these steps:

1. Start the Microsoft Management Console.

2. In the tree view, select Microsoft Transaction Server, then My Computer, then Packages Installed.

3. Click the New icon on the toolbar and create an empty package named MTS Book Samples (the name doesn't really matter, but it matches the image below).

4. Select the new package, right-click it, and click Properties.

5. Click the Identity tab and notice that the default is set to Interactive User, which is the currently logged-in account. If your component runs prior to login, the SYSTEM account will be used. Here you can also change the identity to a specific user account. Note: This setting determines the user context and the rights for what resources your component can access, regardless of the client. Save and exit this dialog.

6. Go down one more level to Components and click the New icon.

7. Click Install New Components and select MTSDemos.dll, which we just created above. If you aren't using the new multi-threaded runtime, also add the MTSDemos.tlb file. Click OK.

8. In the component's Properties, set its Transaction options. If you don't use distributed transactions through DTC, set the "Does not support Transactions" option, which will result in slightly faster performance than the other options. Use "Supports Transactions" if you have a mixed-request load. Typically you'll want to set this to "Supports Transactions".

The figure below shows how the installed component should look inside MTS.

Once the component is registered with MTS, nothing changes in the way the client calls the object, so re-run the previous code but bump the timeout to a higher number so we can see things happening:

o = CREATEOBJECT("MTSDemos.MTSDemo")

? o.SlowHit(15)

Run this code from VFP and then switch back to the Transaction Server Manager. You'll notice that the icon for your object is now spinning, which means it is active and "in call" or in context. Click the detail view and you'll see one active object and one in-call object while your server continues to wait for completion. Once the method completes and the code executes the SetComplete() method call on the Context object, the in-call counter goes down by one, leaving you with one active object reference and no objects that are in call. This status display is quite useful when figuring out how MTS works.
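To get a rough feel for the call overhead discussed earlier, one option is to time a batch of zero-second calls once before the component is installed into MTS and once after, and compare the two numbers. This is only a sketch; the call count of 20 is arbitrary, and the figures will depend heavily on the machine and configuration. Note that under MTS each call that runs SetComplete() re-creates the object, so each call also leaves its own log file in the temp directory.

```foxpro
* Rough timing sketch: run once without MTS and once with the component
* installed in the package, then compare the elapsed times.
LOCAL lo, lnX, lnStart, lnCalls
lnCalls = 20                           && arbitrary number of calls
lo = CREATEOBJECT("MTSDemos.MTSDemo")

lnStart = SECONDS()
FOR lnX = 1 TO lnCalls
   lo.SlowHit(0)                       && zero seconds: call overhead only
ENDFOR
? "Seconds for " + TRANSFORM(lnCalls) + " calls:", SECONDS() - lnStart
```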

If you create another object in your VFP application like this:

o2 = CREATEOBJECT("MTSDemos.MTSDemo")

the active object count will go up by one. Because the Visual FoxPro client is single threaded, you can't run two simultaneous methods from the single VFP client. But you can simulate the process by firing up another copy of VFP, loading instances from there, and running several SlowHit calls simultaneously in each open copy of VFP. These, too, will show up as active instances and stick around. If you now run a SlowHit in each instance of Visual FoxPro, MTS will show two active in-call objects. This demonstrates that the MTS package is indeed 'global' to the system rather than to the application that you're running.

A more typical MTS scenario, however, is a component being called from a multi-threaded client like IIS and Active Server Pages. You can simulate this scenario by creating a pair of ASP pages, using Server.CreateObject() to create instances of your servers, and then running them simultaneously. You'll see them show up as in-call objects while processing. In short, all your object instantiations run through MTS regardless of which process (IIS, VFP) created the object.

Notice that if you create an object from the command window, it will immediately show as activated. Once you make the SlowHit method call and it completes, the object is no longer shown as activated! Yet, if you go back and re-run SlowHit on your existing object reference, it works just fine. What's going on here?

That's Just In Time Activation in action. The above code creates an object, but doesn't call SetComplete() immediately after creating the object in the Init. Until you do, the object is assumed to have state and cannot be released by MTS. As soon as you call SetComplete(), MTS is free to unload your object. The VFP client app, however, doesn't know that the object was unloaded and assumes it is still active, with the app still holding a reference. But the actual reference is to the MTX proxy rather than the real object, which the package has unloaded. When you make the next interface call on the object, MTS re-creates the actual object and services the request from this newly created instance.

As you can see, this requires your object to be stateless. If your object relied on state maintained between method calls, it would break: all properties are cleared, and only the startup values are available. If you have to keep state from one method call to another, be sure not to call SetComplete() or SetAbort() in your method code, in which case the object will not be unloaded by MTS. The object stays loaded and dedicated to your client, showing as activated but not in call between method calls.

Let's look a little closer at what's happening behind the scenes here. To do so, we need to use the WriteOutput() method I mentioned earlier. When called, WriteOutput() sends output to a temporary file; the purpose of this exercise is to see how MTS loads objects. Run the following example code against the object hosted in MTS:

o = CREATEOBJECT("MTSDemos.MTSDemo")

? o.SlowHit(1)

WAIT WINDOW

? o.SlowHit(2)

WAIT WINDOW

? o.SlowHit(3)

The WriteOutput() method creates a new file with a SYS(2015)-generated tag to keep the files separate. It does so once per object instance by keeping the hFile file handle at the object level. For hFile to remain valid, the object has to maintain state; otherwise a new file will be created. After running this code, you'll find that three temporary files were created in your TEMP directory. Each file contains output like the following, with the number after "SlowHit Fired" matching the parameter passed to that particular call:

Init Fired

SlowHit Fired3

Destroy Fired
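Rather than opening the files by hand, a few lines of VFP can list every log file and print its contents. This is a small convenience sketch that assumes the files were written to the same temp directory that SYS(2023) returns for the client, which should hold when the package runs as the interactive user; with a different package identity the files may end up elsewhere.

```foxpro
* Print every log file that WriteOutput() has created so far
LOCAL lnCount, lnX, lcPath
LOCAL ARRAY laFiles[1]

lcPath = SYS(2023) + "\"
lnCount = ADIR(laFiles, lcPath + "MTSDemo*.txt")

FOR lnX = 1 TO lnCount
   ? "--- " + laFiles[lnX, 1]
   ? FILETOSTR(lcPath + laFiles[lnX, 1])
ENDFOR
```

Deleting the old MTSDemo*.txt files before each experiment makes it easier to see how many instances a given test created.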

This clearly demonstrates that MTS does not actually cache object references, but rather re-creates your object from scratch on each method call that calls SetComplete or SetAbort! This means Init and Destroy fire on each method call that uses SetComplete or SetAbort. In addition to being stateless, your object also has to be lightweight in terms of how it instantiates and destroys in order to be efficient. If you have lots of Init code or many properties that are initialized when the object is created, you'll incur a significant amount of overhead on each call to the MTS-controlled object.

Remember, Just In Time Activation unloads objects after the method call completes and a call to SetComplete() or SetAbort() is made in the method.

Now, go ahead and remove the call to SetComplete() from the SlowHit method and recompile the server. Note that if you make the change and recompile the server right away, you'll get a 'File Access Denied' error. This is because MTS is actually holding a reference to your object even though the reference count has gone to 0. To unload the object, bring up the package in the Management Console, right-click it, and choose Shut Down. This function unloads all objects that are loaded inside of the package. Now recompile your component.

When you recompile a component, it is built from VFP as a pure COM component, and the connection to the MTS package is lost at that point. If you were to instantiate your object now, you'd find that it is no longer running in MTS. To reattach the component to the package, go to the Management Console, select the package, then right-click the Components folder. Choose Refresh from the shortcut menu, which rereads the package components into the registry.

Ok, your object is now ready to test again, this time without committing the MTS transaction. Create the MTSDemos object, then call the SlowHit() method three times in a row and release the object. Your WriteOutput() method will create the following output for the three method calls:

Init Fired

SlowHit Fired1

SlowHit Fired2

SlowHit Fired3

Destroy Fired

As you can see, without the committed transaction the object is instantiated once and remains loaded for each of the method calls until you actually release the object. This is very similar to the way an object behaves when it's not running through MTS; in other words, it's stateful.

I consider this unloading/reloading when using MTS transactions to be extremely inefficient behavior, even for lightweight objects.

Consider a typical Web page that uses an ASP COM component written in VFP. In typical scenarios you'll call several methods from a single page. Let's assume for a moment that you make three method calls on your server for the ASP page. If you followed the MTS model properly and created transactable methods that call SetComplete or SetAbort to release state, your COM object will load and unload three times for this single ASP page! Compare that with a single creation when you don't run the component through MTS.

Rather than re-creating objects on each hit, these object references should be pooled and reused, especially if objects are truly stateless. But that's not how MTS currently works, so you'll have to put up with the extra overhead it imposes.

Unfortunately, this is the model that Microsoft recommends for MTS components: each operation (i.e. each self-contained method) should be transacted. A more efficient yet less elegant approach might be to control transaction management from the client layer of the application by making the method calls required for a request and then calling SetComplete/SetAbort as needed when everything has been completed. ASP supports MTS transactions internally, or you can simply add methods to your COM components that wrap the Context object's SetAbort() and SetComplete() methods so they can be accessed externally. The problem with this approach is that the client application now has to have specific knowledge about how the component works in order to make these calls, which diminishes the usefulness of a middle tier server significantly.
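As a rough sketch of the wrapper approach just described, a component might expose one extra method that the client calls once the whole request is finished. The class name ClientTxDemo and the methods DoStep() and Finish() are made up for illustration; only the MTXAS.AppServer.1 object and the context's SetComplete()/SetAbort() methods come from MTS itself.

```foxpro
*** Hypothetical client usage (e.g. from VFP or an ASP page):
***    o = CREATEOBJECT("MTSDemos.ClientTxDemo")
***    o.DoStep("one")
***    o.DoStep("two")
***    o.DoStep("three")
***    o.Finish(.T.)       && release state once, at the end

DEFINE CLASS ClientTxDemo AS Custom OLEPUBLIC

FUNCTION DoStep
LPARAMETERS lcStep
*** ... business logic goes here. No SetComplete()/SetAbort()
*** call is made, so the object stays loaded between calls.
RETURN .T.
ENDFUNC

FUNCTION Finish
LPARAMETERS llSuccess
LOCAL loMTS, loContext

loMTS = CREATEOBJECT("MTXAS.AppServer.1")
loContext = loMTS.GetObjectContext()
IF ISNULL(loContext)
   RETURN .F.      && not running inside of MTS
ENDIF

IF llSuccess
   loContext.SetComplete()
ELSE
   loContext.SetAbort()
ENDIF
RETURN .T.
ENDFUNC

ENDDEFINE
```

With this layout the object is created and destroyed once per request instead of once per method call, at the price of the client having to know that Finish() must be called.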

Frankly, JITA doesn't make a lot of sense for Web applications that come from a multi-threaded client. The reason is that in this scenario ASP or other middleware Web software manages the objects efficiently on its own. For example, ASP loads servers at the top of the page, then releases them at the end. In this respect servers are stateless in their own right without ever dealing with MTS. If you now add MTS into the ASP mix, you simply add overhead to that equation by adding the Out Of Process call, plus all of the loading and unloading required on multiple method calls on the same object. The same applies to tools like Web Connection and FoxISAPI, which use their own very efficient pool managers that actually pool server instances without unloading. These managers are much more efficient than MTS and also more efficient than ASP because there's no overhead for reloading any instances on each Web hit. Servers must still be stateless because the state may vary between requests, but in this case no assumption about the state of the server is made on each hit; it's reestablished on each hit.

Object pooling is something that is currently missing from MTS 2.0 shipping on NT 4. Object pooling is planned for MTS 5.0, but it's unclear at this time exactly what types of COM objects will be able to run in the MTS object pool.

So, where does JITA make sense? Consider servers that have large numbers of connected clients that need to hold connections to servers for long periods of time. A typical example is an application server that's called from client workstations. In this scenario hundreds of users may be connected at any time. The application creates an object reference to a server once, then holds on to it for a long period of time, most likely over a network DCOM connection. If the components are MTS compatible and can release state, objects will actually unload on the server while the client still perceives an active connection to the server. MTS has unloaded the object, but as soon as the client requests another method or property access MTS automatically reloads the object. The client never knows that the object wasn't loaded during the idle time in between. So, instead of running hundreds or thousands of instances of the COM object, only a few are probably active at any point in time. Furthermore, because the object is loaded and managed on the server, accessing that object performs much better than a reconnect via pure DCOM over the network would. This is because the connection with the MTS proxy remains, and only the MTS package reloads the object! This server-side reactivation can provide significant performance gains, especially when objects would otherwise have to be reloaded.

Other scenarios include ASP Sessions. If you have to build stateful ASP applications that must manage users through a site, you can assign a COM reference to an ASP session. Without MTS you may have 1000s of simultaneous objects running, but with MTS the objects load only when an actual method call is made. This is not a very scalable setup even with MTS, but there's a difference between running several hundred objects without MTS vs. just a handful with it.

Look carefully at whether JITA makes sense: in most typical Web application scenarios it does not. For large network COM applications with many clients it may be a huge boon.

One of the handiest features of MTS is its distributed SQL transaction support through application/component code rather than through database transactions. The bottom line to this feature is that you get much easier transaction support that also works across multiple databases and even entirely different database products, say SQL Server and Oracle. In other words, you can create a transaction that writes data in both of these databases and control it with a single code module or even span it across multiple COM object calls. MTS wraps the Distributed Transaction Coordinator (DTC), which works against OLE DB/ODBC data sources. If you've ever needed to write two-phase commit code yourself with stored procedures, you know how difficult this can be, as you have to program the DTC directly via API calls or special SQL commands. Using MTS transactions makes this process relatively painless, using the same SetComplete/SetAbort method calls to commit or abort transactions.

For VFP transactions I suggest you implement a base class that implements the three basic methods that are required for handling MTS transactions. It may not always be possible to use a base class for your business objects, which may already be inheriting from other classes. In that case you can add these methods at the appropriate level of the class hierarchy:

¨ GetObjectContext()

Retrieves an MTS context object, which indicates that the object wants to become stateful. If you want to be part of a SQL transaction you also have to call EnableCommit, which is automated by calling this method with a .t. parameter. This method returns an object reference to the MTS context object.

¨ SetComplete()

Indicates that the object is to go stateless again, indicating success. If EnableCommit was called and transactions were active, the SQL transactions are committed. This method simply wraps the MTS SetComplete method, but also adds a NULL check so this doesn't have to be done in any client calling code.

¨ SetAbort()

Same as SetComplete except that any SQL transactions are aborted. Understand that SetAbort's behavior in a non-transaction environment (i.e. where no SQL transactions are involved) really is no different than SetComplete().

The class code looks like this:

*************************************************************
DEFINE CLASS MTSBASE AS Relation
*************************************************************
***  Function: Wrapper class for MTS transaction objects
***            Suggest you implement this code even on
***            objects that can't inherit from this.
*************************************************************

*** Custom Properties
oContext = .NULL.
oMTS = .NULL.
cErrorMsg = ""

************************************************************************
* MTSBase :: GetObjectContext
*********************************
***  Function: Gets a reference to the MTS Context object
***    Assume: Sets the THIS.oContext property
***      Pass: llTransaction - If this object uses real SQL
***                            transactions set this flag to .t.
***    Return: Context Object or .NULL. on failure
************************************************************************
FUNCTION GetObjectContext
LPARAMETERS llTransaction

THIS.oMTS = CREATEOBJECT("MTXAS.AppServer.1")
IF ISNULL(THIS.oMTS)
   RETURN .NULL.
ENDIF

*** .NULL. here means the object is not running inside of MTS
THIS.oContext = THIS.oMTS.GetObjectContext()
IF ISNULL(THIS.oContext)
   RETURN .NULL.
ENDIF

IF llTransaction
   THIS.oContext.EnableCommit()
ENDIF

RETURN THIS.oContext
ENDFUNC
* MTSBase :: GetObjectContext

************************************************************************
* MTSBase :: SetComplete
*********************************
***  Function: Wrapper around oContext.SetComplete to handle
***            NULLS.
************************************************************************
FUNCTION SetComplete

IF !ISNULL(THIS.oContext)
   THIS.oContext.SetComplete()
ENDIF

ENDFUNC
* MTSBase :: SetComplete

************************************************************************
* MTSBase :: SetAbort
*********************************
***  Function: Wrapper around oContext.SetAbort to handle
***            NULLS.
************************************************************************
FUNCTION SetAbort

IF !ISNULL(THIS.oContext)
   THIS.oContext.SetAbort()
ENDIF

ENDFUNC
* MTSBase :: SetAbort

ENDDEFINE

To demonstrate how transactions work against database updates, let's look at a simple, if not very useful, example. To keep things simple I'll use the SQL Server Pubs database with a single table, Authors. The following code runs two SQL UPDATE statements, which can either be committed or aborted depending on the parameters passed into the method. While this example uses a single database and table, realize that you could write code that does the same thing with two completely separate databases (i.e. one update to a SQL Server table, one to an Oracle table) with exactly the same results:

DEFINE CLASS MTSDemo AS MTSBase OLEPUBLIC

************************************************************************
* MTSDemo :: TransactAbortDemo
*********************************
***  Function: Demonstrates that you can modify any DTC database
***            and abort all changes without writing SQL transaction
***            code. This simple example writes two changes and either
***            writes them or aborts them based on the llFail flag.
***            Real uses will involve multiple tables or even
***            multiple databases (SQL Server and Oracle)
***    Assume: Doesn't work against Fox Data
***      Pass: lcID    - Pubs Author ID (optional)
***            lcPhone - Phone number to set to
***            llFail  - If .T. abort the transaction, otherwise
***                      write
***    Return: .T. or .F.
************************************************************************
FUNCTION TransactAbortDemo
LPARAMETER lcID, lcPhone, llFail
LOCAL oSQL, lnResult

lcID=IIF(EMPTY(lcID),"213-46-8915",lcID)
lcPhone=IIF(EMPTY(lcPhone),"123",lcPhone)

*** Get Object Context with Transactions
THIS.GetObjectContext(.T.)

*** wwSQL is a SQL Passthrough wrapper class
oSQL = CREATEOBJECT("wwSQL")
IF !oSQL.Connect("Pubs")
   THIS.cErrorMsg = oSQL.cErrorMsg
   THIS.SetAbort()
   RETURN .F.
ENDIF

lnResult = oSQL.Execute("Update authors "+ ;
                        " set phone = '" + lcPhone + "' " + ;
                        " where au_id = '" + lcID + "' " )
IF lnResult < 1
   THIS.cErrorMsg = oSQL.cErrorMsg
   THIS.SetAbort()
   RETURN .F.
ENDIF

lcID = "172-32-1176"  && Just a valid record in the Authors table
lnResult = oSQL.Execute("Update authors " + ;
                        " set phone = '" + lcPhone + "'" + ;
                        " where au_id = '" + lcID + "' " )
IF lnResult < 1
   THIS.cErrorMsg = oSQL.cErrorMsg
   THIS.SetAbort()
   RETURN .F.
ENDIF

*** Both updates succeeded: abort on purpose if llFail, else commit
IF llFail
   THIS.SetAbort()
   RETURN .F.
ELSE
   THIS.SetComplete()
   RETURN .T.
ENDIF

ENDFUNC

ENDDEFINE

To run this code make sure that you build the server and then add it into MTS as a component. This example runs against the SQL Server Pubs database, so you need to also make sure that you have a DSN called Pubs set up in the ODBC manager. Since we're using SQL Server here I suggest you bring up the SQL Server Enterprise manager and run the following query in its query tool to see the data:

SELECT * FROM Authors

This will allow you to see the data as it changes (or doesn't change on aborted transactions). In VFP you can now run this sample with:

o=create("MTSDemos.MTSDemo")

? o.TransactAbortDemo(,"999",.F.)

? o.cErrorMsg

The first parameter is the author ID (a Social Security number in the Pubs database) of the record you want to modify. The second is the phone number to set, and the third is a flag that determines whether you want to force the transaction to fail on purpose (simulating a problem).

If you run this with the flag left at .F., or with no parameter passed, the two updates in the example go through and the changes are applied to the database. Do this first to validate that your SQL syntax is working correctly. Then change the phone number above from "999" to something else and set the flag to .T. This time around you'll find that the data in the table does not change because the transaction was aborted!

So what? This would be easy enough to do with regular SQL code, right? Well, look at the code again! Realize that in order to abort both SQL commands all I have to do is call SetAbort(). There is no explicit SQL transaction code involved at all: no special BEGIN TRANSACTION/COMMIT/ROLLBACK code to deal with in the Transact-SQL of a stored procedure or a SQLExecute() call. Furthermore, remember this is an overly simple example. This very same code will work against multiple databases, where it would be impossible to write Transact-SQL spanning the two, especially if they are, for example, SQL Server and Oracle.
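For comparison, here is roughly what manual transaction handling looks like with plain SQL passthrough against a single connection. This is only a sketch: it assumes the same Pubs DSN with a login that connects without prompting, and it uses VFP's native SQLCONNECT()/SQLEXEC() functions rather than the wwSQL wrapper. Unlike the MTS version, it is tied to one connection and cannot span two different servers.

```foxpro
*** Manual transaction with SQL passthrough, for contrast only
LOCAL lnHandle, llOk

lnHandle = SQLCONNECT("Pubs")
IF lnHandle < 1
   RETURN .F.
ENDIF

*** 2 = manual transactions (DB_TRANSMANUAL in FOXPRO.H)
= SQLSETPROP(lnHandle, "Transactions", 2)

llOk = SQLEXEC(lnHandle, "Update authors set phone = '999' " + ;
                         "where au_id = '213-46-8915'") > 0
IF llOk
   llOk = SQLEXEC(lnHandle, "Update authors set phone = '999' " + ;
                            "where au_id = '172-32-1176'") > 0
ENDIF

*** The commit/rollback decision has to be coded by hand
IF llOk
   = SQLCOMMIT(lnHandle)
ELSE
   = SQLROLLBACK(lnHandle)
ENDIF

*** 1 = back to automatic transactions (DB_TRANSAUTO)
= SQLSETPROP(lnHandle, "Transactions", 1)
= SQLDISCONNECT(lnHandle)

RETURN llOk
```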

This functionality lets you write code at the COM component level in your middle tier or business logic layers of the application rather than being forced to write them at the SQL Script level. Writing the code at the higher level provides much better abstraction since the transaction logic is also database backend independent. Moreover, the DTC code automatically handles the updates against totally different backends, which is extremely difficult to program otherwise.

It's also possible to spread logic over multiple COM objects by using the Context.CreateInstance() method to create new components that run within the same transaction context as the currently executing transaction. This allows for unlimited nesting of transaction logic. If at any point SetAbort() is called all the data is rolled back.
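A minimal sketch of that nesting follows. It assumes a second, hypothetical component MTSDemos.AuditLog with a LogChange() method, installed in the same package and marked as requiring a transaction; the class and method names NestedDemo and UpdateWithAudit are also made up for illustration.

```foxpro
DEFINE CLASS NestedDemo AS Custom OLEPUBLIC

FUNCTION UpdateWithAudit
LOCAL loMTS, loContext, loAudit, llOk

loMTS = CREATEOBJECT("MTXAS.AppServer.1")
loContext = loMTS.GetObjectContext()
IF ISNULL(loContext)
   RETURN .F.      && not running inside of MTS
ENDIF

*** ... this component's own database updates would go here ...

*** Create the child through the context object so that it runs
*** in the caller's transaction context.
loAudit = loContext.CreateInstance("MTSDemos.AuditLog")
llOk = loAudit.LogChange("phone updated")

*** One vote decides the fate of all the work done above
IF llOk
   loContext.SetComplete()
ELSE
   loContext.SetAbort()
ENDIF

RETURN llOk
ENDFUNC

ENDDEFINE
```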

If you use SQL transactions you can also monitor the status of the Distributed Transaction Coordinator right inside of IIS:

There are two kinds of security to set up with MTS: package security as configured by the identity of the package, and role-based security for actual security checking from within the code. The package identity determines which user account is impersonated when the components in the package run. In other words, the package has access to all resources that the specified user has access to. All the components running within the package automatically inherit these rights and this user account. This is a boon especially for applications that run in IIS. As you may know, IIS always uses IUSR_Machinename as the anonymous user account, which COM objects loaded from IIS automatically inherit. This can be a problem because this account has no rights and there's no way to change this user account! By using MTS you can override the security, which changes impersonation as soon as the package is accessed by the COM client. This is very similar to the way that DCOMCNFG allows configuration of an EXE COM component's user identity. In fact, that's exactly what MTS does behind the scenes for the package wrapper, which is simply an EXE COM server.

When you set up a user id you can choose either Interactive User, which is a good choice for standalone applications and even most Web applications, or a specific user account.

One of the nicest features of MTS is its ability to check for security via roles, independent of a user's login ID. MTS allows you to map users to an MTS role and lets you easily check for this role through code. The user in question will be the client that is calling your application, typically the Interactive User in standalone applications, or the IUSR_ account when calling from a Web application. Components can check who is accessing them by configuring roles for specific users and then checking for the role with the IsCallerInRole method:

IF loContext.IsCallerInRole("BookReader")

THIS.WriteOutput("Caller is in role")

ENDIF

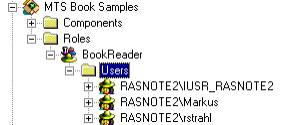

To add roles, use the management controls to add a role and then add users to the role as needed. The accompanying figure shows the setup of BookReader users.

Role-based security comes in very handy when you need to check user identities closely inside a component. For Web applications, most requests come in as generic users on the IUSR_ account, but through Authentication (basic Authentication or NT Passthrough security) it's possible to pass user account information down to components to be checked by roles.
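As a slightly fuller sketch of a role check, the method below also calls the context object's IsSecurityEnabled() method first, since IsCallerInRole() reports .T. for every caller when security is not enabled for the component. The class name RoleDemo and the method name CanReadBooks are made up for illustration; the BookReader role is the one configured above.

```foxpro
DEFINE CLASS RoleDemo AS Custom OLEPUBLIC

FUNCTION CanReadBooks
LOCAL loMTS, loContext

loMTS = CREATEOBJECT("MTXAS.AppServer.1")
loContext = loMTS.GetObjectContext()
IF ISNULL(loContext)
   RETURN .F.      && not running inside of MTS: deny
ENDIF

*** Without security enabled every caller appears to be in
*** every role, so treat that case as a failed check.
IF !loContext.IsSecurityEnabled()
   RETURN .F.
ENDIF

RETURN loContext.IsCallerInRole("BookReader")
ENDFUNC

ENDDEFINE
```

Denying access when the context is missing or security is disabled is a design choice for this sketch; a component used only for testing outside of MTS might prefer to allow the call instead.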

Whew. That was a lot of info and still I haven't touched on all of the functionality that MTS provides. MTS is fairly strategic to Microsoft both in terms of marketing (currently this appears to be the primary reason for the MTS push) as well as the scalability push that will be provided by new features in Windows 2000. In Windows 2000 MTS will help you take advantage of many of the advanced scalability features without having to understand and know every detail of their implementation behind the scenes. This is a typical middle tier implementation feature and MTS fits this bill nicely. It remains to be seen how well Microsoft will succeed at this ambitious goal set for the release of Windows 2000; much of this is unclear even now that Beta 3 of Win2000 is under way…

However, if you're looking for big scalability gains from MTS today, you'll be very disappointed. MTS can provide enhancements in some scalability scenarios, especially those that need to deal with lots of simultaneously and continuously connected clients, but this is not a common scenario, especially in the Web environment which is so popular today for large scale applications. MTS also doesn't currently provide any features for scaling beyond a single machine, which is a mystery to me…

Regardless, I think MTS is important enough to understand now. MTS will be very tightly integrated with the Windows 2000 COM+ system and the line between 'plain' COM and MTS COM objects will start to blur considerably as MTS features are merged directly into the base COM system.

Keep in mind that MTS is also useful beyond scalability. The distributed transaction support is one feature that is priceless in terms of ease of implementation, as is the packaging and role-based security implementation.

Weigh the benefits of function versus performance carefully. This is much more important for server applications than for others, because performance is a crucial issue for the success or failure of such an application.

Rick Strahl is an independent developer in Maui, Hawaii. His company West Wind Technologies specializes in Internet application development and tools focused on Internet Information Server, ISAPI, C++ and Visual FoxPro. Rick is author of West Wind Web Connection, a powerful Web application framework for Visual FoxPro, West Wind HTML Help Builder, co-author of Visual WebBuilder, a Microsoft Most Valuable Professional, and a frequent contributor to FoxPro magazines and books. His new book "Internet Applications with Visual FoxPro 6.0", was published April 1999 by Hentzenwerke Publishing. You can contact Rick at: http://www.west-wind.com/.